Managing the Pipeline

Data manipulation in ParaView is distinctive because of the underlying pipeline architecture that it inherits from VTK. Each filter takes in some data and produces something new from it. Filters do not directly modify the data that is given to them. Instead, they pass unmodified data through by reference (to conserve memory) and augment it with newly generated or changed data. Because input data is never altered in place, you can, unlike in many visualization tools, apply several filtering operations in different combinations to your data during a single ParaView session. You see the result of each filter as it is applied, which helps guide your data exploration, and you can easily display any or all intermediate pipeline outputs simultaneously.
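The pass-by-reference behavior described above can be pictured with a small toy model. This is a sketch in plain Python, not ParaView or VTK code: the dictionary-based "data set" and the `elevation_filter` function are assumptions made purely for illustration.

```python
# Toy model only: a "data set" is a dict mapping array names to lists.
# Nothing here is ParaView or VTK API.

def elevation_filter(dataset):
    """Return a new data set that shares the input arrays and adds one array."""
    output = dict(dataset)  # shallow copy: existing arrays are shared, not duplicated
    output["Elevation"] = [z for (_x, _y, z) in dataset["Points"]]
    return output

sphere = {"Points": [(0.0, 0.0, -0.5), (0.5, 0.0, 0.0), (0.0, 0.0, 0.5)]}
elevated = elevation_filter(sphere)

print(elevated["Points"] is sphere["Points"])  # True: same array object, no copy
print("Elevation" in sphere)                   # False: the input was not altered
```

Because the input is left untouched, a second filter could be applied to `sphere` afterwards and would see exactly the original data.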

The Pipeline Browser depicts ParaView's current visualization pipeline and allows you to easily navigate to the various readers, sources, and filters it contains. Connecting an initial data set, loaded from a file or created from a ParaView source, to a filter creates a two-stage visualization pipeline. The initial data set becomes the input to the filter and, if needed, the output of that filter can be used as the input to a subsequent filter.

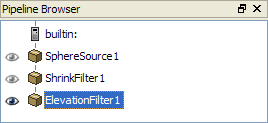

For example, suppose you create a sphere in ParaView by selecting Sphere from the Sources menu. In this example, the sphere is the initial data set. Next, create a Shrink filter from the Alphabetical submenu of the Filters menu. Because the sphere source was the active filter when the shrink filter was created, the shrink filter operates on the sphere source's output. Optionally, use the Properties tab of the Object Inspector to set initial parameters of the shrink filter, and then click Apply. Next, create an Elevation filter to filter the output of the shrink filter and click Apply again. You have just created a simple three-element linear pipeline in ParaView. You will now see the following in the Pipeline Browser.

Within the Pipeline Browser, an "eye" icon to the left of each entry indicates whether that data set is currently visible. If there is no eye icon, the data produced by that filter is not compatible with the active view window. Otherwise, a dark eye icon indicates that the data set is visible, and a light gray icon indicates that the data set is viewable but currently hidden. Clicking on the eye icon toggles the visibility of the corresponding data set. In the above example, all three filters are potentially visible, but only the ElevationFilter is actually being displayed. The ElevationFilter is also highlighted in blue, indicating that it is the "active" filter. Since it is the "active" filter, the Object Inspector reflects its content and the next filter created will use it as the input.

You can always change the parameters of any filter in the pipeline after it has been applied. Left-click on the filter in the Pipeline Browser to make it the "active" one. The Properties, Display, and Information tabs always reflect the "active" filter. When you make changes in the Properties tab and apply them, all filters downstream of the changed one are automatically updated. Double-clicking the name of a filter makes the name editable, so you can change it to something more meaningful than the default chosen by ParaView.

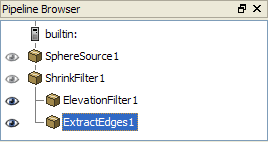

By default, each filter you add to the pipeline becomes the active filter, which is handy when making linear pipelines. Branching pipelines are also very useful. The simplest way to make one is to click on some other filter further upstream in the pipeline before you create a new filter. For example, select ShrinkFilter1 in the Pipeline Browser, then apply Extract Edges from the Alphabetical submenu of the Filters menu. Now the output of the shrink filter is being used as the input to both the elevation and extract edges filters. You will see the following in the Pipeline Browser.

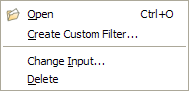
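The branching pipeline from this example can also be pictured as a small graph. The sketch below is a toy model in plain Python, not ParaView's API: the `Node` class, the `downstream` helper, and the exact entry names are hypothetical. It shows one filter feeding two consumers, and which entries would need to re-execute when an upstream entry changes.

```python
# Toy model only; class, function, and entry names are hypothetical,
# not ParaView API.

class Node:
    def __init__(self, name, upstream=None):
        self.name = name
        self.upstream = upstream   # the node whose output this node consumes
        self.consumers = []        # nodes that use this node's output
        if upstream is not None:
            upstream.consumers.append(self)

def downstream(node):
    """Names of every node that depends, directly or indirectly, on `node`."""
    names = []
    for consumer in node.consumers:
        names.append(consumer.name)
        names.extend(downstream(consumer))
    return names

sphere = Node("Sphere1")
shrink = Node("ShrinkFilter1", upstream=sphere)
elevation = Node("ElevationFilter1", upstream=shrink)
# Making the shrink filter active before creating a filter yields a branch.
edges = Node("ExtractEdges1", upstream=shrink)

print([c.name for c in shrink.consumers])
# ['ElevationFilter1', 'ExtractEdges1']
print(downstream(sphere))
# ['ShrinkFilter1', 'ElevationFilter1', 'ExtractEdges1']
```

In this model, changing a parameter on the sphere would invalidate everything returned by `downstream(sphere)`, while changing the elevation filter would affect nothing else.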

Right-clicking a filter in the Pipeline Browser displays a context menu from which you can do several things. For reader modules, you can use this menu to load a new data file. Any module can be saved (the filter and the parameters you have set on it) as a Custom Filter (see the last section of this chapter), or deleted (if it is at the end of the visualization pipeline). For filter modules, you can also use this menu to change the input to the filter, and thus rearrange the visualization pipeline.

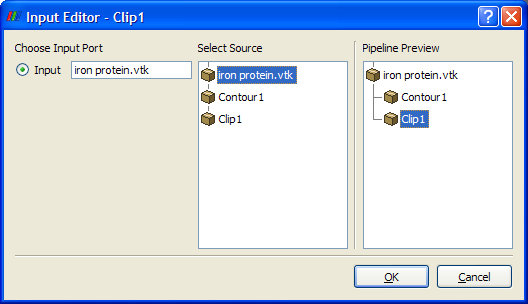

To rearrange the pipeline, select Change Input from the context menu. That will bring up the Input Editor dialog box shown in Figure 5. The name of the dialog box reflects the filter that you are moving. The middle Select Source pane shows the pipeline as it currently stands; use this pane to select the filter that the chosen filter should be moved onto. This pane only allows you to choose compatible filters, that is, ones that produce data that can be ingested by the filter you are moving. It also does not allow you to create loops in the pipeline. Left-click to make your choice from the allowed set of filters, and the rightmost Pipeline Preview pane will show what the pipeline will look like once you commit your change. Click OK to commit or Cancel to abort the move.
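The rule that a new input must not create a loop can be sketched as follows. This is a toy model in plain Python under stated assumptions: the pipeline is stored as a hypothetical dictionary mapping each entry to its single input, the helper names are invented, and data-type compatibility (which the dialog also checks) is not modeled.

```python
# Toy model only: the pipeline is a dict mapping each entry to its input.
# Names are hypothetical; this is not how ParaView stores its pipeline.

def is_downstream_of(pipeline, candidate, moving):
    """True if `candidate` depends, directly or indirectly, on `moving`."""
    current = pipeline.get(candidate)
    while current is not None:
        if current == moving:
            return True
        current = pipeline.get(current)
    return False

def allowed_inputs(pipeline, moving):
    """Entries that could become the input of `moving` without forming a loop."""
    return [
        name for name in pipeline
        if name != moving and not is_downstream_of(pipeline, name, moving)
    ]

pipeline = {
    "Sphere1": None,
    "ShrinkFilter1": "Sphere1",
    "ElevationFilter1": "ShrinkFilter1",
    "ExtractEdges1": "ShrinkFilter1",
}

print(allowed_inputs(pipeline, "ShrinkFilter1"))
# ['Sphere1']
print(allowed_inputs(pipeline, "ExtractEdges1"))
# ['Sphere1', 'ShrinkFilter1', 'ElevationFilter1']
```

The shrink filter cannot take the elevation or extract edges filter as its input because both already consume its output; a leaf such as the extract edges filter can be moved onto any other entry.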

Some filters require more than one input (see the discussion of merging pipelines below). For those filters, the leftmost input port pane of the dialog shows more than one port. Use that pane together with the Select Source pane to specify each input in turn.

Conversely, some filters produce more than one output. Thus, another way to make a branching pipeline is simply to open a reader that produces multiple distinct data sets. An example is the SLAC reader, which produces both a polygonal output and a structured data field output. Whenever you have a branching pipeline, keep in mind that it is important to select the proper branch on which to extend the pipeline. For example, if you want to apply a filter like the Extract Subset filter, which operates only on structured data, you cannot do so while the SLAC reader's polygonal output is the currently active filter. You must first click on the reader's structured data output to make it the active one.

Some filters that produce multiple data sets do so in a different way. Instead of producing several fundamentally distinct data sets, they produce a single composite data set that contains many sub data sets. See the Understanding Data chapter for an explanation of composite data sets. With composite data it is usually best to treat the entire group as one entity, and you do not need to do anything in particular to do so. Sometimes, though, you want to operate on a particular set of sub data sets. To do that, apply the Extract Block filter, which allows you to pick the desired sub data set(s). Next, apply the filter you are actually interested in to the extract filter's output. An alternative is to press 'B' to use Block Selection (see the Selection chapter) in a 3D View and then use the Extract Selection filter.
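The extract-then-filter workflow can be pictured with another toy model. This is plain Python, not ParaView API: the list-of-blocks representation, the function names, and the block names and coordinates are all made up for illustration.

```python
# Toy model only: a composite data set is a list of (name, points) blocks.
# Function names and sample data are hypothetical, not ParaView API.

def extract_block(composite, indices):
    """Keep only the sub data sets at the given block indices."""
    return [composite[i] for i in indices]

def scale_points(block, factor):
    """Stand-in for whichever filter you actually want to apply."""
    name, points = block
    return (name, [tuple(factor * c for c in p) for p in points])

composite = [
    ("wing", [(1.0, 0.0, 0.0)]),
    ("tail", [(0.0, 2.0, 0.0)]),
    ("body", [(0.0, 0.0, 4.0)]),
]

selected = extract_block(composite, [0, 2])
result = [scale_points(block, 0.5) for block in selected]

print([name for name, _points in result])  # ['wing', 'body']
print(len(composite))                      # 3: the composite input is unchanged
```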

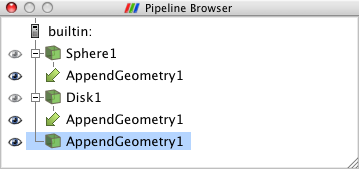

Pipelines merge as well, whenever they contain filters that take in more than one input to produce their own output (or outputs). There are in fact two different varieties of merging filters. The Append Geometry and Group Data Sets filters are examples of the first kind. These filters take in any number of fundamentally similar data sets. Append, for example, takes in one or more polygonal data sets and combines them into a single large polygonal data set. Group takes in a set of data sets of any type and produces a single composite data set. To use this type of merging filter, select more than one filter within the Pipeline Browser by left-clicking to select the first input and then shift-left-clicking to select the rest. Now create the merging filter from the Filters menu as usual. The pipeline in this case will look like the one in the following figure.

Other filters take in more than one fundamentally different data set. An example is the Resample with Dataset filter, which takes in one data set (the Input) that represents a field in space to sample values from, and another data set (the Source) to use as a set of locations in space at which to sample the values. Begin this type of merge by choosing either of the two inputs as the active filter and then creating the merging filter from the Filters menu. A modified version of the Change Input dialog shown in Figure 5 appears (this one lacks a Pipeline Preview pane). Click on either of the ports listed in the Available Inputs pane and specify an input for it from the Select Input pane. Then switch to the other port in the Available Inputs pane and choose the other input in the Select Input pane. When you are satisfied with your choices, click OK on the dialog and then click Apply to create the merging filter.